Quick Facts

- Category: AI & Machine Learning

- Published: 2026-05-01 05:14:07

Introduction: The Next Frontier in Ad Recommendation

Meta continues to push the boundaries of AI-driven recommendation systems, aiming to improve both user experience and advertiser outcomes. Its latest system, the Meta Adaptive Ranking Model, addresses a critical challenge: scaling runtime models to the size and complexity of large language models (LLMs) while maintaining the speed and cost-efficiency required to serve billions of daily users. This article explores the model’s core innovations, how it resolves the inference trilemma, and the results it has delivered so far.

The Inference Trilemma: A Fundamental Challenge

As Meta scales its ads recommender systems to LLM scale, it encounters a pressing issue known as the inference trilemma: three requirements that pull against one another.

- Model complexity – the need for deeper understanding of user interests and intent,

- Latency constraints – requiring sub-second response times for real-time ad serving,

- Cost efficiency – operating at global scale without prohibitive compute and memory costs.

Traditional "one-size-fits-all" inference approaches fail to satisfy all three simultaneously. Meta recognized that a smarter, adaptive strategy was needed to bend the inference scaling curve.

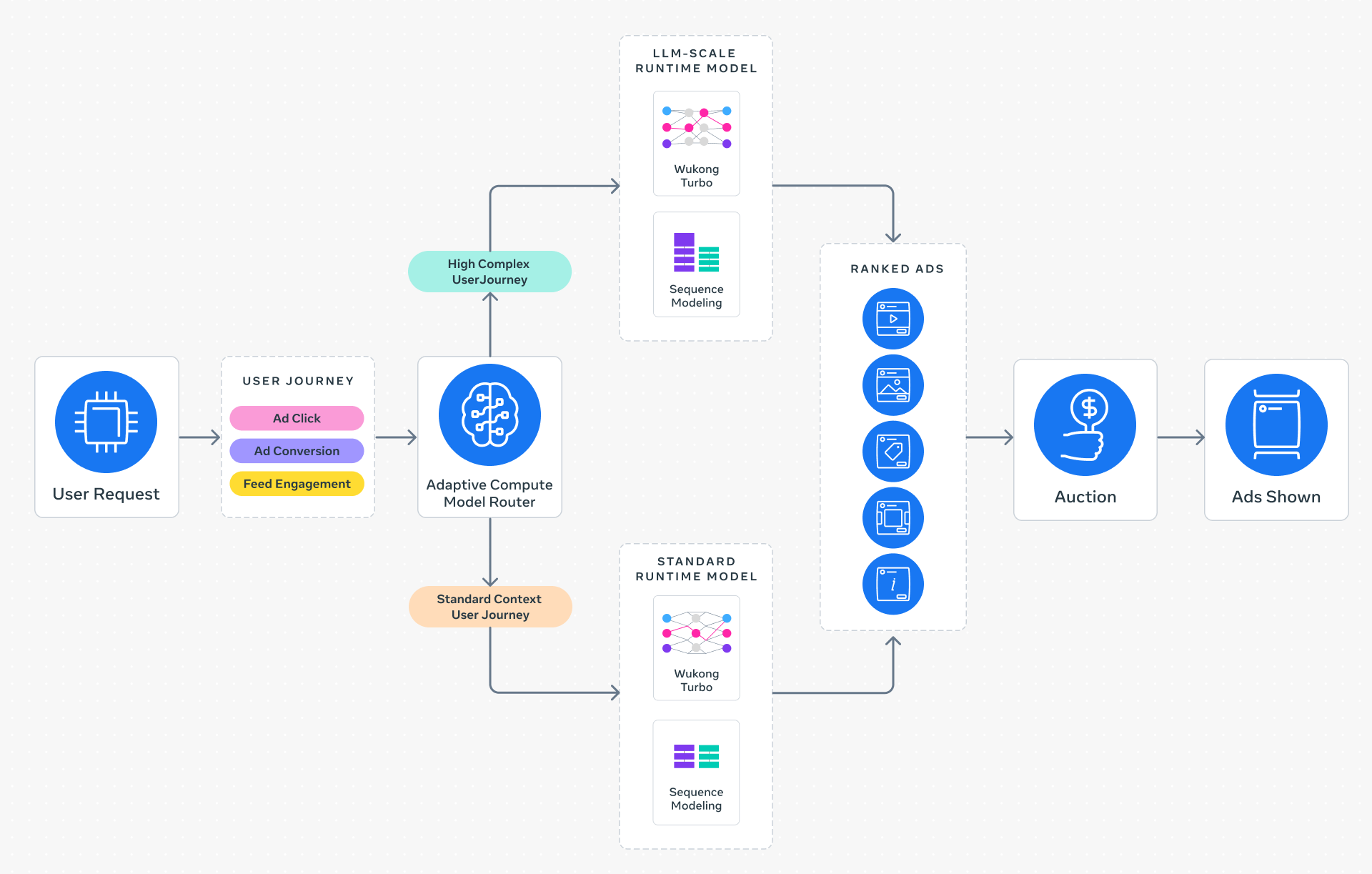

Meta Adaptive Ranking Model: A Dynamic Solution

The Adaptive Ranking Model replaces static inference with intelligent request routing. Instead of applying a uniform model to every ad request, the system dynamically matches model complexity to the user’s context and intent. This ensures each request is served by the most effective yet efficient model, maintaining sub-second latency while delivering a high-quality experience for every person.

How It Works

The system analyzes user signals—such as recent activity, session context, and historical behavior—to determine the appropriate level of model depth. For straightforward requests, a lighter model suffices; for complex or high-value interactions, the full LLM-scale model is deployed. This targeted approach saves compute resources without sacrificing accuracy.
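The routing idea described above can be sketched in a few lines. This is only an illustrative sketch, not Meta's implementation: the signal names (`recent_actions`, `history_length`, `estimated_value`), the weights, the threshold, and the two model tiers with their relative costs are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestSignals:
    # All three fields are hypothetical stand-ins for "user signals".
    recent_actions: int      # interactions in the current session
    history_length: int      # number of events in long-term history
    estimated_value: float   # predicted value of the impression, in [0, 1]


@dataclass(frozen=True)
class ModelTier:
    name: str
    relative_cost: float     # compute cost relative to the light tier


# Assumed tiers; the 20x cost ratio is invented for illustration.
LIGHT = ModelTier("light", 1.0)
FULL = ModelTier("full", 20.0)


def complexity_score(s: RequestSignals) -> float:
    """Combine the signals into a single score in [0, 1]."""
    activity = min(s.recent_actions / 20.0, 1.0)
    history = min(s.history_length / 1000.0, 1.0)
    return 0.3 * activity + 0.2 * history + 0.5 * s.estimated_value


def route(s: RequestSignals, threshold: float = 0.5) -> ModelTier:
    """Send complex or high-value requests to the full model, others to the light one."""
    return FULL if complexity_score(s) >= threshold else LIGHT


simple_request = RequestSignals(recent_actions=2, history_length=50, estimated_value=0.1)
rich_request = RequestSignals(recent_actions=30, history_length=2000, estimated_value=0.8)

print(route(simple_request).name)  # light
print(route(rich_request).name)    # full
```

In a production system the hand-written score would more plausibly be a learned model, and there could be more than two tiers. The point of the sketch is only that the expensive model runs on a subset of requests.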

Three Key Innovations Behind the Model

To serve LLM-scale models at Meta’s massive scale, the team rethought the entire inference stack. Three innovations stand out:

1. Inference-Efficient Model Scaling

By adopting a request-centric architecture, the Adaptive Ranking Model runs an LLM-scale model at sub-second latency. This shift allows a deeper understanding of user interests and intent without compromising the real-time experience. The architecture prioritizes the most relevant computations for each request, avoiding unnecessary overhead.
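One common way to avoid unnecessary per-request overhead in ranking systems is to compute the user-side representation once per request and reuse it for every candidate ad. The sketch below illustrates that general pattern; it is an assumption about what "request-centric" could look like in practice, not a description of Meta's architecture, and `score_request`, `CountingEncoder`, and `dot` are hypothetical names.

```python
from typing import Callable, List, Sequence

Vector = List[float]


def score_request(
    user_features: Vector,
    candidate_ads: Sequence[Vector],
    encode_user: Callable[[Vector], Vector],
    score_pair: Callable[[Vector, Vector], float],
) -> List[float]:
    """Encode the user once per request, then score each candidate against it."""
    user_repr = encode_user(user_features)  # the expensive step, done once
    return [score_pair(user_repr, ad) for ad in candidate_ads]


class CountingEncoder:
    """Toy stand-in for an expensive user encoder; counts how often it runs."""

    def __init__(self) -> None:
        self.calls = 0

    def __call__(self, features: Vector) -> Vector:
        self.calls += 1
        return [2.0 * x for x in features]


def dot(a: Vector, b: Vector) -> float:
    """Toy stand-in for the per-candidate scoring step."""
    return sum(x * y for x, y in zip(a, b))


encoder = CountingEncoder()
ads = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
scores = score_request([1.0, 0.5], ads, encoder, dot)

print(scores)          # [2.0, 1.0, 3.0]
print(encoder.calls)   # 1
```

With three candidates the encoder still runs once, so the cost of the user-side computation does not grow with the number of ads being ranked.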

2. Model/System Co-Design

Meta developed hardware-aware model architectures that align model design with the capabilities and limitations of the underlying silicon. This co-design approach significantly improves hardware utilization across heterogeneous environments, from CPUs to specialized accelerators, maximizing throughput and minimizing waste.

3. Reimagined Serving Infrastructure

The serving infrastructure uses multi-card architectures and hardware-specific optimizations. This enables scaling to the order of 1 trillion parameters, O(1T), so that Meta can serve LLM-scale runtime recommender models efficiently. The infrastructure is built to handle the massive computational demands while keeping costs in check.
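A rough back-of-the-envelope calculation shows why a model of this size needs more than one card. The numbers below are assumptions for illustration only: the article does not state the numeric precision, the accelerator memory size, or how much of that memory is available for weights.

```python
import math


def min_cards_for_weights(
    num_params: int,
    bytes_per_param: int,
    card_memory_gb: float,
    usable_fraction: float = 0.8,
) -> int:
    """Lower bound on cards needed just to hold the weights in device memory."""
    weight_bytes = num_params * bytes_per_param
    usable_bytes_per_card = card_memory_gb * 1e9 * usable_fraction
    return math.ceil(weight_bytes / usable_bytes_per_card)


ONE_TRILLION = 10**12

# Assumed: 2-byte (16-bit) weights, 80 GB cards, 80% of memory usable for weights.
cards_16bit = min_cards_for_weights(ONE_TRILLION, 2, 80)

# Assumed: 1-byte (8-bit) weights on the same cards.
cards_8bit = min_cards_for_weights(ONE_TRILLION, 1, 80)

print(cards_16bit)  # 32
print(cards_8bit)   # 16
```

Under these assumptions, 1 trillion parameters occupy about 2 TB at 16-bit precision, far beyond a single card. This is only a lower bound on weight storage; it ignores activations, replication for throughput, and communication overhead between cards.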

Real-World Impact: Results and Performance

Since its launch on Instagram in Q4 2025, the Adaptive Ranking Model has demonstrated significant improvements:

- a 3% increase in ad conversions for targeted users,

- a 5% increase in ad click-through rate (CTR).

These gains are achieved while maintaining system-wide computational efficiency, ensuring superior performance for businesses of all sizes. By integrating LLM-scale intelligence into the ad stack, Meta delivers higher advertiser value without degrading user experience.

Conclusion: Bending the Curve

The Meta Adaptive Ranking Model bends the inference scaling curve. It resolves the trilemma by dynamically adjusting model complexity per request, co-designing models with hardware, and reimagining the serving infrastructure. As Meta continues to invest in AI-driven recommendation systems, this model sets a new standard for balancing performance, latency, and cost at global scale. For advertisers, it means better results; for users, more relevant ads; and for the industry, a clear path forward.