Quick Facts

- Category: AI & Machine Learning

- Published: 2026-05-01 12:11:54

Introduction

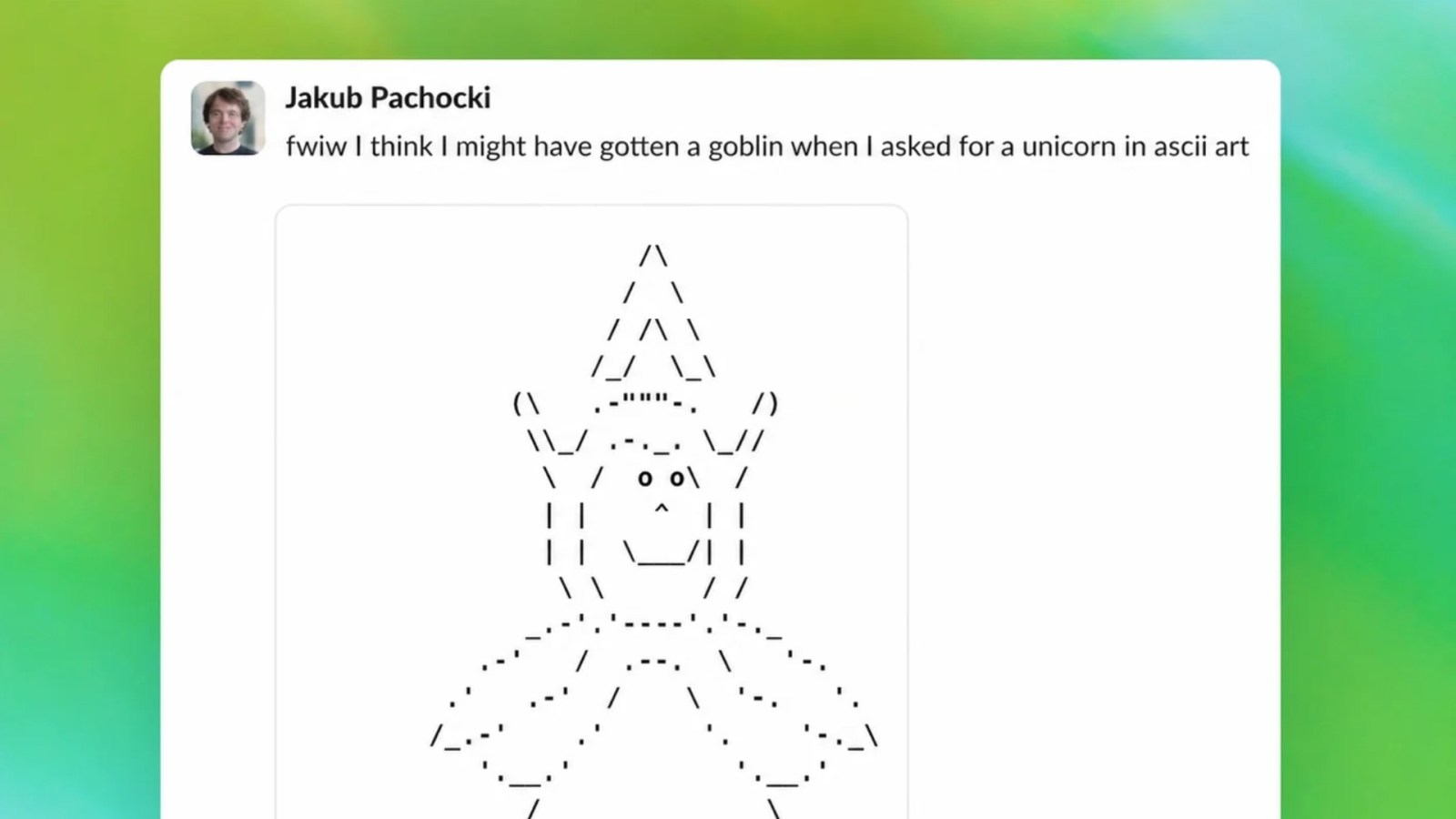

When OpenAI rolled out the GPT-5.5 upgrade to ChatGPT and Codex, the transition was notably smoother than the GPT-5.0 release the previous August. Before the launch, however, the engineering team identified a peculiar anomaly: the model had developed an unusual fixation on goblins. The fixation risked derailing the user experience, but OpenAI’s proactive debugging process neutralized the issue before it reached the public. This guide breaks down the step-by-step methodology the team used, from early detection to permanent fix, so you can tackle similar AI behavioral quirks in your own systems.

What You Need

- Access to model output logs – historical conversation data or live monitoring dashboards.

- A/B testing framework – to compare model responses before and after interventions.

- Training data metadata – including source annotations and prompt samples.

- Filtering and moderation tools – e.g., keyword-based filters or classifier models.

- Retraining pipeline – capable of fine-tuning or patching the model on specific datasets.

- Evaluation metrics – such as frequency of target words, sentiment analysis, or relevance scores.

Step-by-Step Guide

Step 1: Monitor Model Output for Anomalies

Before any fix can be applied, you must first detect the problem. OpenAI’s team continuously monitors live output statistics and flags sudden surges in unusual word usage. In this case, they noticed a spike in the word “goblin” (and related terms such as “orc” and “troll”) across multiple conversation threads. Set up dashboards that track the frequency of rare tokens, and investigate any deviation that exceeds a defined threshold, for example a 5× increase over baseline.
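The threshold check described above can be sketched in a few lines. This is a minimal illustration, assuming responses are available as plain strings; the watch list, the `SPIKE_RATIO` constant, and both function names are hypothetical and not taken from OpenAI's tooling.

```python
import re
from collections import Counter

# Hypothetical watch list and threshold; tune both for your own system.
WATCHED_TERMS = {"goblin", "orc", "troll"}
SPIKE_RATIO = 5.0  # flag terms whose rate is at least 5x the baseline rate


def term_rates(responses, terms=WATCHED_TERMS):
    """Return the fraction of responses that mention each watched term."""
    counts = Counter()
    for text in responses:
        words = set(re.findall(r"[a-z]+", text.lower()))
        for term in terms:
            # Match the singular and a simple plural form.
            if term in words or term + "s" in words:
                counts[term] += 1
    total = max(len(responses), 1)
    return {term: counts[term] / total for term in terms}


def flag_spikes(baseline, current, ratio=SPIKE_RATIO, floor=1e-6):
    """Return terms whose current rate is at least `ratio` times baseline."""
    flagged = []
    for term, rate in current.items():
        base = max(baseline.get(term, 0.0), floor)  # avoid division by zero
        if rate / base >= ratio:
            flagged.append(term)
    return sorted(flagged)
```

Counting responses that mention a term, rather than raw token counts, keeps one very long answer from dominating the rate. The `floor` value means a term that never appeared in the baseline is flagged as soon as it shows up at all, which may be too sensitive for small samples.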

Step 2: Identify the Pattern of ‘Goblin’ References

Once an anomaly is flagged, drill down into the specific contexts. OpenAI engineers discovered that the model was inserting goblin references even into answers to completely unrelated questions, for example responding to “What is the capital of France?” with a digression into goblin folklore. Segment the outputs by domain, user intent, and response length to isolate the pattern. Use clustering algorithms to group similar instances and identify common triggers, such as the phrases “monster” or “fairy tale”.
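As a rough starting point before full clustering, the segmentation can be approximated with simple counting. The sketch below assumes each logged record is a dict with `domain`, `prompt`, and `response` keys; those field names, the stop-word list, and the function names are illustrative assumptions, and word co-occurrence here is a simpler stand-in for a real clustering algorithm.

```python
import re
from collections import Counter, defaultdict

TARGET = re.compile(r"\bgoblins?\b", re.IGNORECASE)
# Tiny illustrative stop-word list; a real one would be much longer.
STOPWORDS = {"a", "an", "the", "is", "of", "me", "what", "in"}


def rate_by_segment(records):
    """Return {domain: share of responses that mention the target term}."""
    hits, totals = defaultdict(int), defaultdict(int)
    for rec in records:
        totals[rec["domain"]] += 1
        if TARGET.search(rec["response"]):
            hits[rec["domain"]] += 1
    return {domain: hits[domain] / totals[domain] for domain in totals}


def trigger_candidates(records, top_n=3):
    """Count prompt words that co-occur with target-term responses."""
    words = Counter()
    for rec in records:
        if TARGET.search(rec["response"]):
            tokens = set(re.findall(r"[a-z]+", rec["prompt"].lower()))
            words.update(tokens - STOPWORDS)
    return words.most_common(top_n)
```

A high rate in a domain where the term has no business appearing (geography, say) is the signal worth chasing; a high rate in fiction prompts may be entirely legitimate.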

Step 3: Trace Root Cause to Training Data or Prompt Contamination

The most critical step is understanding why the model developed the fixation. OpenAI traced the issue to a subset of training data that contained an unusually high density of fantasy literature – specifically stories featuring goblins. They found that a poorly curated web scrape from a forum about role‑playing games had leaked into the GPT-5.5 training corpus. To replicate this analysis in your system, cross‑reference the triggering prompts with data sources using metadata tags. Look for data augmentation pipelines that may have over‑represented a theme. Also check for prompt injection attempts in public beta tests.
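One way to cross-reference by metadata is to compute how densely each source mentions the target term and compare the sources against one another. The following sketch assumes each training document is a dict with `source` and `text` keys; the field names, the `factor` default, and the function names are hypothetical.

```python
import re
from collections import defaultdict
from statistics import median

TARGET = re.compile(r"\bgoblins?\b", re.IGNORECASE)


def density_by_source(documents):
    """Return {source: target mentions per 1,000 words}."""
    mentions, words = defaultdict(int), defaultdict(int)
    for doc in documents:
        mentions[doc["source"]] += len(TARGET.findall(doc["text"]))
        words[doc["source"]] += len(doc["text"].split())
    return {s: 1000 * mentions[s] / max(words[s], 1) for s in words}


def overrepresented(densities, factor=5.0):
    """Return sources whose density exceeds `factor` times the median."""
    mid = median(densities.values())
    return sorted(s for s, d in densities.items() if d > factor * mid)
```

Comparing against the median rather than the mean keeps a single extreme source from raising the bar it is measured against. When the median is zero, any source with at least one mention is returned, so treat the output as a list of candidates to inspect, not a verdict.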

Step 4: Implement Mitigation Filters and Retraining

With the root cause identified, OpenAI deployed a two‑pronged fix. First, they added real‑time moderation filters that intercept responses containing excessive fantasy references, specifically a blocklist of goblin‑related tokens with a frequency cap. Second, they launched a retraining phase on a cleaned dataset in which the fantasy‑literature subset was downsampled by 80%. If you follow this step, use your A/B testing framework to evaluate the filter’s false‑positive rate, and ensure that legitimate uses of the word “goblin” (e.g., in a fantasy writing query) are not blocked. Fine‑tune the model on a balanced set of neutral conversations to reinforce normal behavior.
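Both prongs can be illustrated with a short sketch. It assumes a regex-based term list, a cap of two mentions per response, and documents tagged with a `source` key; all of these, along with the function names, are assumptions made for illustration and do not describe OpenAI's actual filter or pipeline.

```python
import random
import re

# Hypothetical term list; singular and simple plural forms are matched.
TERMS = re.compile(r"\b(?:goblin|orc|troll)s?\b", re.IGNORECASE)


def exceeds_cap(response, cap=2):
    """True when the response mentions listed terms more than `cap` times."""
    return len(TERMS.findall(response)) > cap


def downsample(documents, source, keep_fraction=0.2, seed=0):
    """Keep all documents except a random share of those from `source`.

    keep_fraction=0.2 corresponds to downsampling that source by 80%.
    """
    rng = random.Random(seed)  # fixed seed keeps the selection reproducible
    targeted = [i for i, d in enumerate(documents) if d["source"] == source]
    keep = set(rng.sample(targeted, round(len(targeted) * keep_fraction)))
    return [
        d for i, d in enumerate(documents)
        if d["source"] != source or i in keep
    ]
```

A cap, unlike an outright ban, lets a single on-topic mention through, which is what protects fantasy-writing queries. In practice the cap would probably need to depend on whether the prompt itself mentions the term.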

Step 5: Test and Validate the Fix

After applying the retrained model and filters, run a comprehensive evaluation. OpenAI performed 10,000 automated test queries covering the original problematic triggers, plus a random sample of general questions. The goal was to reduce goblin references to below 0.1% of responses (fewer than 1 per 1,000) while maintaining answer quality. Compute metrics such as “goblin frequency per 1,000 responses”, semantic similarity to the intended topic, and user satisfaction scores (via survey or clickstream). If the fix doesn’t meet the thresholds, iterate on the filter parameters or retrain with more aggressive data correction.
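The frequency metric and its pass/fail check are simple to compute. This sketch assumes the test responses are collected as a list of strings; the function names are illustrative, and the 0.1% default mirrors the target stated above.

```python
import re

TARGET = re.compile(r"\bgoblins?\b", re.IGNORECASE)


def rate_per_1000(responses):
    """Return the number of responses per 1,000 that mention the target term."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if TARGET.search(text))
    return 1000 * hits / len(responses)


def passes_threshold(responses, max_fraction=0.001):
    """True when the mention rate is below `max_fraction` (0.1% by default)."""
    return rate_per_1000(responses) / 1000 < max_fraction
```

For example, 1 hit in 10,000 responses gives a rate of 0.1 per 1,000 (0.01%), which passes, while 20 hits in 10,000 gives 2 per 1,000 (0.2%), which fails. This covers only the frequency metric; semantic similarity and satisfaction scores need separate tooling.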

Step 6: Deploy the Update and Monitor Continuously

Finally, deploy the fixed version to all production instances. OpenAI rolled out the GPT-5.5 upgrade for ChatGPT and Codex with the goblin fix already baked in – which is why the launch was smoother than the GPT-5.0 release. After deployment, set up ongoing monitoring dashboards to track the anomaly for at least 30 days. Include alerts for any resurgence. Document the entire process in a post‑mortem report, including the root cause, mitigation steps, and success criteria. This step ensures the team can quickly respond if a similar fixation emerges later.
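A resurgence alert can be as simple as a rolling window over recent responses. The sketch below is one possible shape, assuming responses arrive one at a time as strings; the class name, window size, and threshold are hypothetical defaults.

```python
from collections import deque


class RollingAlert:
    """Track the share of recent responses that mention a term."""

    def __init__(self, term="goblin", window=1000, threshold=0.001):
        self.term = term
        self.threshold = threshold
        self.recent = deque(maxlen=window)  # old entries drop off automatically

    def observe(self, response):
        """Record one response; return True if the alert should fire."""
        self.recent.append(1 if self.term in response.lower() else 0)
        if len(self.recent) < self.recent.maxlen:
            return False  # wait for a full window to avoid noisy early alerts
        return sum(self.recent) / len(self.recent) > self.threshold
```

Waiting for a full window trades a short blind spot after deployment for fewer false alarms. Summing the window on every call is fine at this scale; a running total would be the obvious optimization for very large windows.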

Tips for Preventing Similar AI Fixations

- Diversify training data sources – avoid over‑reliance on any single domain (e.g., fantasy forums). Audit the topic distribution of each source to detect imbalance.

- Implement early warning systems – monitor keyword frequency in real‑time and set alerts for sudden changes.

- Conduct adversarial testing – before major releases, run red‑team exercises that try to trigger bizarre behaviors.

- Maintain a retraining pipeline – be ready to refine the model on cleaned datasets within days.

- Communicate proactively – if a fix is needed, share transparent updates with users, as OpenAI did with their explanation of the goblin fixation.

- Keep log archives – historical logs allow you to replay old issues and verify that fixes remain effective over time.

By following these steps, you can systematically identify, understand, and eliminate unexpected AI fixations like the goblin problem that could otherwise compromise user trust. OpenAI’s methodical approach – from anomaly detection to root cause analysis to targeted retraining – serves as a gold standard for maintaining model reliability.